EGOK360

A 360 EGOCENTRIC KINETIC HUMAN ACTIVITY VIDEO DATASET

TXSTATE-CVML-Lab | Texas State University

Keshav Bhandari, Mario A. DeLaGarza, Ziliang Zong, Hugo Latapi [Cisco, USA]

DOWNLOAD DATASET [ONEDRIVE]

DOWNLOAD DATASET [DATAVERSE REPO]

DOWNLOAD DATASET JSON LIST [DATAVERSE REPO]

DOWNLOAD DATASET DOWNLOADER SCRIPT [VIA DATAVERSE REPO] | PYTHON 3.6+

DOWNLOAD PAPER

DOWNLOAD DATA LIST CREATOR [TRAIN/TEST SPLITTER] | PYTHON 3.6+
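The data list creator produces train/test splits, but its input format and splitting strategy are not described on this page. The following is only a minimal sketch of a per-class (stratified) split, assuming a hypothetical list of entries with "video" and "label" keys. It is not the released splitter, and the key names, label names, and the `stratified_split` function are illustrative assumptions.

```python
import random
from collections import defaultdict
from typing import Dict, List, Tuple

Entry = Dict[str, str]  # hypothetical entry shape: {"video": ..., "label": ...}


def stratified_split(entries: List[Entry], test_fraction: float = 0.2,
                     seed: int = 0) -> Tuple[List[Entry], List[Entry]]:
    """Split entries into (train, test), holding out roughly `test_fraction`
    of each label so every class appears in both lists.

    Assumes each entry has a hypothetical "label" key; this is a sketch,
    not the EgoK360 data list creator.
    """
    if not 0.0 <= test_fraction <= 1.0:
        raise ValueError("test_fraction must be in [0, 1]")

    # Group entries by activity label.
    by_label = defaultdict(list)
    for entry in entries:
        by_label[entry["label"]].append(entry)

    rng = random.Random(seed)  # fixed seed -> reproducible split
    train: List[Entry] = []
    test: List[Entry] = []
    for label in sorted(by_label):  # sorted for deterministic ordering
        items = list(by_label[label])
        rng.shuffle(items)
        # Number of held-out items for this label (Python's round()).
        n_test = int(round(len(items) * test_fraction))
        test.extend(items[:n_test])
        train.extend(items[n_test:])
    return train, test
```

With 10 hypothetical clips split evenly over two labels and `test_fraction=0.2`, one clip per label is held out, giving 8 training and 2 test entries. Splitting per label rather than over the whole list keeps rare activity classes from disappearing from either split.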

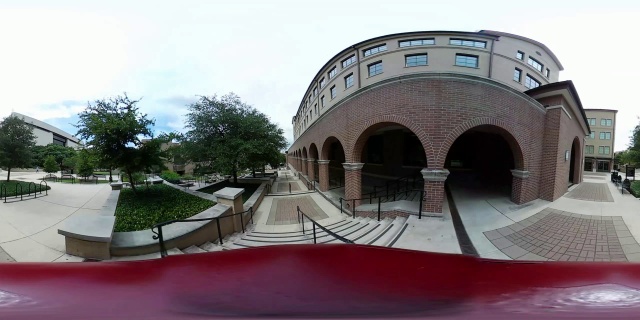

sample